Kubernetes (K8s) is an open-source project originally developed by Google. It is a platform for managing and orchestrating containerized applications, and it supports continuous integration and delivery of those applications. It is variously described as a container platform, a microservices platform, a portable cloud platform and more.

Docker on its own manages containers on a single machine. If you need a cluster of several machines for your container platform, Kubernetes is an excellent choice! Speaking of Docker, Kubernetes works well with container tools such as Docker, and it also supports rkt.

Docker Swarm also provides a container-centric environment, as Kubernetes does. The main reason to choose Kubernetes instead is that it is more flexible and powerful.

The steps below create a K8s cluster. A K8s cluster typically has one master node and any number of worker nodes, which we call minions. We manage the cluster from the master using kubeadm and kubectl.

The following components make up a K8s cluster:

1. kube-apiserver: Component on the master that exposes the Kubernetes API.

2. etcd: A consistent key-value store that Kubernetes uses as the backing store for all cluster data.

3. kube-scheduler: This component watches for newly created pods that have no node assigned and selects a node for each of them to run on.

4. kube-controller-manager: This component runs controllers such as the node controller, the replication controller, the endpoints controller, and the service account and token controllers.

5. kube-proxy: This component enables the Kubernetes service abstraction by maintaining network rules on the host and performing connection forwarding.

6. kubeadm: A tool that helps you bootstrap a K8s cluster.

7. kubectl: Command Line Interface for Kubernetes.

8. kubelet: An agent that runs on every minion (and, in a kubeadm cluster, on the master as well) and is responsible for creating, starting and deleting containers. It communicates with Docker to supervise that process.

9. pods: A pod is a group of one or more containers that are deployed together. Pods are deployed on the minions.
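To make the pod definition more concrete, here is a minimal sketch of a pod with two containers. The helper `demo_pod_manifest`, the pod name `demo-pod` and the container names `web` and `sidecar` are hypothetical; the public `nginx` and `busybox` images are an assumption chosen only for illustration.

```shell
# demo_pod_manifest: prints a hypothetical two-container Pod manifest.
# Both containers are scheduled together onto the same minion and share
# the pod's network namespace, so "sidecar" could reach "web" on localhost:80.
demo_pod_manifest() {
  cat <<'EOF'
apiVersion: v1
kind: Pod
metadata:
  name: demo-pod
spec:
  containers:
  - name: web
    image: nginx
    ports:
    - containerPort: 80
  - name: sidecar
    image: busybox
    # Keeps the second container alive so the pod has two running containers.
    command: ["sh", "-c", "sleep 3600"]
EOF
}
```

Once the cluster from the following steps is running, `demo_pod_manifest | kubectl apply -f -` would create the pod, and `kubectl get pod demo-pod` should eventually report both containers as ready in the READY column.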

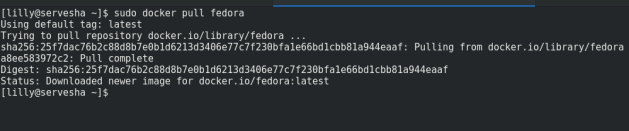

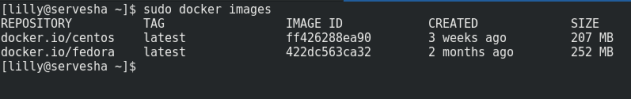

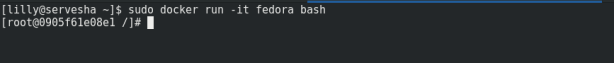

Steps to create a Kubernetes cluster on Fedora:

1. First, set up your DNS server configuration if you haven’t already.

Add the hostname and IP address of the master node and of every node (minion) to the /etc/hosts file on the master and on each node:

192.168.100.10 kube-master
192.168.100.20 kube-minion1
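When the same setup is repeated on several machines, an idempotent helper avoids piling up duplicate /etc/hosts lines. This is a minimal POSIX-shell sketch; the function name `add_host_entry` is hypothetical, and the IPs and hostnames are the example values from this step.

```shell
# add_host_entry FILE IP HOSTNAME
# Appends "IP HOSTNAME" to FILE unless HOSTNAME already appears as a
# hostname or alias column on a non-comment line.
add_host_entry() {
  local file="$1" ip="$2" name="$3"
  # awk compares every column after the IP exactly, so "kube-minion1"
  # does not match "kube-minion10". Exit status 0 means "found".
  if awk -v n="$name" '
      !/^[[:space:]]*#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
      END { exit !found }' "$file" 2>/dev/null; then
    return 0   # already present; leave the file untouched
  fi
  printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Run as root on the master and on every minion, for example `add_host_entry /etc/hosts 192.168.100.10 kube-master` followed by `add_host_entry /etc/hosts 192.168.100.20 kube-minion1`. Re-running the same calls leaves the file unchanged.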

2. Stop and disable the firewalld service, and put SELinux into permissive mode, on both the master node and the minions.

# systemctl stop firewalld.service && systemctl disable firewalld.service

# setenforce 0
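`setenforce 0` only switches SELinux to permissive mode until the next reboot, whereas `systemctl disable` already makes the firewalld change persistent. To keep the SELinux setting across reboots as well, the `SELINUX=` line in /etc/selinux/config can be changed. A minimal sketch, assuming GNU `sed` (as shipped with Fedora); the helper name `set_selinux_mode` is hypothetical.

```shell
# set_selinux_mode FILE MODE
# Rewrites the "SELINUX=" line of an SELinux config file to MODE.
# The "SELINUXTYPE=" line is not touched, because the pattern requires
# "=" immediately after "SELINUX".
set_selinux_mode() {
  local file="$1" mode="$2"
  case "$mode" in
    enforcing|permissive|disabled) ;;
    *) echo "invalid SELinux mode: $mode" >&2; return 1 ;;
  esac
  sed -i "s/^SELINUX=.*/SELINUX=${mode}/" "$file"   # GNU sed in-place edit
}
```

On each machine, as root: `setenforce 0 && set_selinux_mode /etc/selinux/config permissive` applies the change now and after a reboot.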

3. Set up the Kubernetes repository on both the master node and the minions.

Create a kubernetes.repo file under /etc/yum.repos.d/ with the following content:

[kubernetes]
name=kubernetes
baseurl=https://packages.cloud.google.com/yum/repos/kubernetes-el7-x86_64
enabled=1
gpgcheck=1
repo_gpgcheck=1
gpgkey=https://packages.cloud.google.com/yum/doc/yum-key.gpg
       https://packages.cloud.google.com/yum/doc/rpm-package-key.gpg

4. Install kubeadm and Docker.

After configuring the K8s repository, run the following commands on the master and on every minion to install kubeadm and Docker (at the time of writing, this installed kubeadm 1.9.3 and Docker 1.13), then start and enable the kubelet and docker daemons.

# dnf install kubeadm docker -y

# systemctl start kubelet && systemctl enable kubelet

# systemctl start docker && systemctl enable docker
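Because `dnf install kubeadm docker` installs whatever version the repository currently offers, it can be worth checking that you got the versions this walkthrough was written against. A small POSIX-shell sketch; the helper name `version_has_prefix` is hypothetical.

```shell
# version_has_prefix ACTUAL WANTED
# Succeeds if ACTUAL, with an optional leading "v" removed, equals WANTED
# or starts with WANTED followed by a dot: "v1.9.3" matches "1.9",
# while "v1.19.0" does not.
version_has_prefix() {
  local actual="${1#v}"
  case "$actual" in
    "$2" | "$2".*) return 0 ;;
    *) return 1 ;;
  esac
}
```

For example, assuming your kubeadm build supports `kubeadm version -o short`, you could run `version_has_prefix "$(kubeadm version -o short)" 1.9 || echo "unexpected kubeadm version"`, and similarly pass the output of `docker version --format '{{.Server.Version}}'` with `1.13`.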

5. Initialize the Kubernetes master.

Running the command below makes kubeadm set up the Kubernetes master.

# kubeadm init
[init] Using Kubernetes version: v1.9.3
[init] Using Authorization modes: [Node RBAC]
[preflight] Running pre-flight checks.
[WARNING FileExisting-crictl]: crictl not found in system path
[certificates] Generated ca certificate and key.
[certificates] Generated apiserver certificate and key.
[certificates] apiserver serving cert is signed for DNS names [master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.122.202]
[certificates] Generated apiserver-kubelet-client certificate and key.
[certificates] Generated sa key and public key.
[certificates] Generated front-proxy-ca certificate and key.
[certificates] Generated front-proxy-client certificate and key.
[certificates] Valid certificates and keys now exist in "/etc/kubernetes/pki"
[kubeconfig] Wrote KubeConfig file to disk: "admin.conf"
[kubeconfig] Wrote KubeConfig file to disk: "kubelet.conf"
[kubeconfig] Wrote KubeConfig file to disk: "controller-manager.conf"
[kubeconfig] Wrote KubeConfig file to disk: "scheduler.conf"
[controlplane] Wrote Static Pod manifest for component kube-apiserver to "/etc/kubernetes/manifests/kube-apiserver.yaml"
[controlplane] Wrote Static Pod manifest for component kube-controller-manager to "/etc/kubernetes/manifests/kube-controller-manager.yaml"
[controlplane] Wrote Static Pod manifest for component kube-scheduler to "/etc/kubernetes/manifests/kube-scheduler.yaml"
[etcd] Wrote Static Pod manifest for a local etcd instance to "/etc/kubernetes/manifests/etcd.yaml"
[init] Waiting for the kubelet to boot up the control plane as Static Pods from directory "/etc/kubernetes/manifests".
[init] This might take a minute or longer if the control plane images have to be pulled.
[apiclient] All control plane components are healthy after 206.004414 seconds
[uploadconfig] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace
[markmaster] Will mark node master as master by adding a label and a taint
[markmaster] Master master tainted and labelled with key/value: node-role.kubernetes.io/master=""
[bootstraptoken] Using token: ed97cd.b299e938a599b1cf
[bootstraptoken] Configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials
[bootstraptoken] Configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token
[bootstraptoken] Configured RBAC rules to allow certificate rotation for all node client certificates in the cluster
[bootstraptoken] Creating the "cluster-info" ConfigMap in the "kube-public" namespace
[addons] Applied essential addon: kube-dns
[addons] Applied essential addon: kube-proxy
Your Kubernetes master has initialized successfully!
To start using your cluster, you need to run the following as a regular user:
  mkdir -p $HOME/.kube
  sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
  sudo chown $(id -u):$(id -g) $HOME/.kube/config
You should now deploy a pod network to the cluster.
Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
  https://kubernetes.io/docs/concepts/cluster-administration/addons/
You can now join any number of machines by running the following on each node as root:
  kubeadm join --token ed97cd.b299e938a599b1cf 192.168.122.202:6443 --discovery-token-ca-cert-hash sha256:ca448b9562b26ede20ce987a9ef09c9912b8112153a94dd163847dafe464973b

The master is now running, but how does it know which nodes (minions) belong to it? When we ran ‘kubeadm init’, kubeadm generated a bootstrap token for exactly this purpose. Run the following command on every minion to present this token to the master and join the cluster.

# kubeadm join --token ed97cd.b299e938a599b1cf 192.168.122.202:6443 --discovery-token-ca-cert-hash sha256:ca448b9562b26ede20ce987a9ef09c9912b8112153a94dd163847dafe464973b
[preflight] Running pre-flight checks.
[WARNING Service-Kubelet]: kubelet service is not enabled, please run 'systemctl enable kubelet.service'
[WARNING Service-Docker]: docker service is not enabled, please run 'systemctl enable docker.service'
[WARNING FileExisting-crictl]: crictl not found in system path
[discovery] Trying to connect to API Server "192.168.122.190:6443"
[discovery] Created cluster-info discovery client, requesting info from "https://192.168.122.190:6443"
[discovery] Requesting info from "https://192.168.122.190:6443" again to validate TLS against the pinned public key
[discovery] Cluster info signature and contents are valid and TLS certificate validates against pinned roots, will use API Server "192.168.122.190:6443"
[discovery] Successfully established connection with API Server "192.168.122.190:6443"
This node has joined the cluster:
* Certificate signing request was sent to master and a response was received.
* The Kubelet was informed of the new secure connection details.
Run 'kubectl get nodes' on the master to see this node join the cluster.
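Every minion needs the exact join command printed by `kubeadm init`, so it helps to keep that output. A minimal sketch, assuming you saved it with something like `kubeadm init | tee kubeadm-init.log` (the log filename and the helper name `extract_join_command` are hypothetical) and that the join command sits on a single line, as in the kubeadm v1.9.3 output of the previous step.

```shell
# extract_join_command: reads saved `kubeadm init` output on stdin and
# prints the last "kubeadm join ..." command found in it, without the
# leading indentation. Assumes the command is not split across lines.
extract_join_command() {
  grep -o 'kubeadm join .*' | tail -n 1
}
```

On the master, `extract_join_command < kubeadm-init.log` prints the command to copy to each minion. Keep in mind that kubeadm bootstrap tokens expire after 24 hours by default, so a saved command only works within that window.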

After initializing the Kubernetes master, run the following commands on the master so that kubectl can access the cluster as the root user.

# mkdir -p $HOME/.kube

# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

# chown $(id -u):$(id -g) $HOME/.kube/config

On the master, run the following command to check whether the nodes are ready.

# kubectl get nodes
NAME      STATUS     ROLES     AGE       VERSION
master    NotReady   master    14m       v1.9.3
node      NotReady   <none>    12m       v1.9.3

To check the status of the system pods, run the following command:

# kubectl get pods --all-namespaces
NAMESPACE     NAME                               READY     STATUS    RESTARTS   AGE
kube-system   etcd-master                        1/1       Running   0          8m
kube-system   kube-apiserver-master              1/1       Running   1          7m
kube-system   kube-controller-manager-master     1/1       Running   0          7m
kube-system   kube-dns-6f4fd4bdf-jfvxs           0/3       Pending   0          7m
kube-system   kube-proxy-9r5ld                   1/1       Running   0          7m
kube-system   kube-proxy-dh4h9                   1/1       Running   0          7m
kube-system   kube-scheduler-master              1/1       Running   0          8m
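With only a handful of pods, the Pending kube-dns pod in this listing is easy to spot; on a larger cluster a filter helps. A sketch that reads `kubectl get pods --all-namespaces --no-headers` output, assuming the column order NAMESPACE NAME READY STATUS RESTARTS AGE shown above; the helper name `not_running_pods` is hypothetical.

```shell
# not_running_pods: reads `kubectl get pods --all-namespaces --no-headers`
# output on stdin and prints namespace, name, READY and STATUS for every
# pod that is not "Running" or does not have all of its containers ready.
not_running_pods() {
  awk '{
    split($3, r, "/")                  # READY column: ready/total containers
    if ($4 != "Running" || r[1] != r[2])
      print $1, $2, $3, $4
  }'
}
```

Usage: `kubectl get pods --all-namespaces --no-headers | not_running_pods`. For the listing above it would print only the kube-dns line, and it prints nothing once every pod is up.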

Right now the nodes are ‘NotReady’ (and the kube-dns pod is stuck in Pending) because no pod network has been deployed yet. There are many options for the pod network, such as Flannel, Weave Net, Calico, Romana, kube-router and Canal. In this article we use Weave Net.

6. Deploy the pod network.

# export kubever=$(kubectl version | base64 | tr -d '\n')

# kubectl apply -f "https://cloud.weave.works/k8s/net?k8s-version=$kubever"
serviceaccount "weave-net" created
clusterrole "weave-net" created
clusterrolebinding "weave-net" created
role "weave-net" created
rolebinding "weave-net" created
daemonset "weave-net" created

Once the pod network is deployed, the nodes should become Ready and the pods should reach the Running state.
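Instead of re-running `kubectl get nodes` by hand until the network comes up, you can poll for it. A minimal sketch, assuming the `kubectl get nodes --no-headers` column order NAME STATUS ROLES AGE VERSION shown earlier; the function names and the 5-second interval are hypothetical choices.

```shell
# all_nodes_ready: reads `kubectl get nodes --no-headers` output on stdin.
# Succeeds only if at least one node is listed and every STATUS column is
# exactly "Ready" (a cordoned "Ready,SchedulingDisabled" node fails).
all_nodes_ready() {
  awk '{ n++; if ($2 != "Ready") bad++ } END { exit (n == 0 || bad > 0) }'
}

# wait_for_nodes [TIMEOUT_SECONDS]: polls every 5 seconds until
# all_nodes_ready succeeds, or gives up after the timeout (default 300).
wait_for_nodes() {
  local timeout="${1:-300}" waited=0
  until kubectl get nodes --no-headers 2>/dev/null | all_nodes_ready; do
    if [ "$waited" -ge "$timeout" ]; then
      echo "nodes not Ready after ${timeout}s" >&2
      return 1
    fi
    sleep 5
    waited=$((waited + 5))
  done
}
```

Running `wait_for_nodes 600` on the master blocks until every node reports Ready or ten minutes have passed. An empty listing, for example while the API server is unreachable, counts as "not ready yet" rather than success.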

You can watch the cluster's events as they happen with the following command:

# kubectl get events -w

Our pod network is now ready. Let’s check the status of the nodes and pods on the master again.

# kubectl get nodes
NAME      STATUS    ROLES     AGE       VERSION
master    Ready     master    14m       v1.9.3
node      Ready     <none>    12m       v1.9.3

# kubectl get pods --all-namespaces
NAMESPACE     NAME                               READY     STATUS    RESTARTS   AGE
kube-system   etcd-master                        1/1       Running   0          4m
kube-system   kube-apiserver-master              1/1       Running   1          4m
kube-system   kube-controller-manager-master     1/1       Running   0          4m
kube-system   kube-dns-6f4fd4bdf-jfvxs           3/3       Running   0          17m
kube-system   kube-proxy-9r5ld                   1/1       Running   0          17m
kube-system   kube-proxy-dh4h9                   1/1       Running   0          17m
kube-system   kube-scheduler-master              1/1       Running   0          4m
kube-system   weave-net-kkp8t                    2/2       Running   0          7m
kube-system   weave-net-nw66p                    2/2       Running   0          7m

Your Kubernetes cluster is ready and its pods are running. The next step is to deploy something!